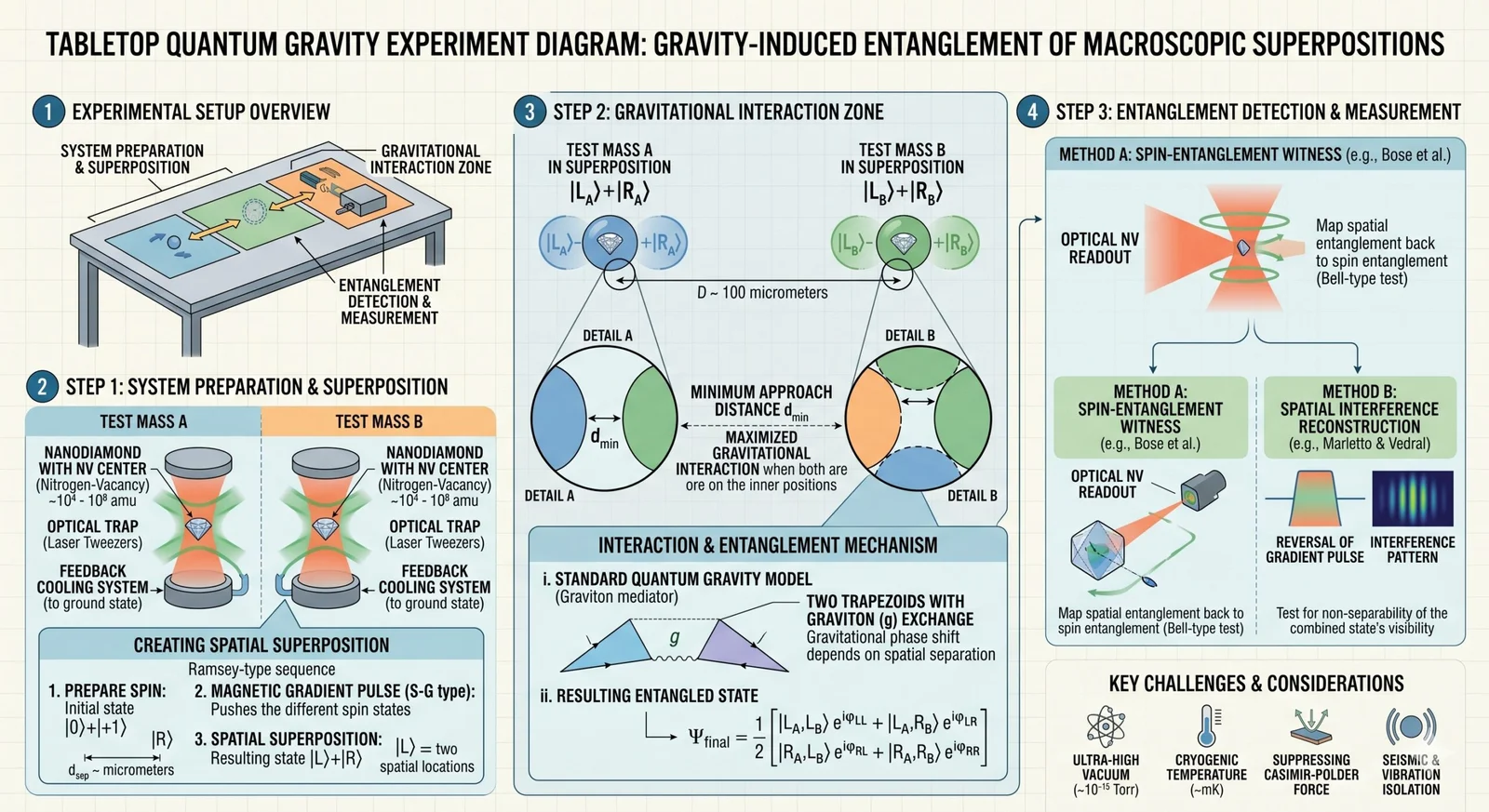

Quantum Gravity in the Lab: How Tabletop Experiments Are Testing Spacetime’s Fabric

Introduction: For decades, quantum gravity, the quest to unite General Relativity with Quantum Mechanics, lived chiefly in the realm of thought experiments and high‑energy cosmology. Recent advances in quantum sensing, optomechanics, and ultra‑precise metrology have opened a surprising new frontier: tabletop‑scale experiments that probe the very structure of spacetime at energies far below the Planck scale. …

The Future of Coding: AI Agents as True Collaborators

AI agents are evolving from simple assistants to true collaborators that understand codebases, execute tasks, and learn from feedback. Here’s what that means for developers.

The AI Bifurcation: From 2-Million-Token Giants to $5 Micro-Agents

The Week AI Split in Two: If you’ve been feeling AI fatigue lately, you’re not imagining it. Breakthroughs that used to define entire decades are now happening on a weekly cadence, and keeping up feels less like staying informed and more like drinking from a fire hose. But buried under the noise this week is …

DeepMind Just Solved Another Piece of Biology’s Puzzle: AlphaFold 4 Unveiled

**By Bergsy | February 28, 2026** The quest to understand life’s machinery took another leap forward this morning. Google DeepMind has released **AlphaFold 4**, the latest iteration of its revolutionary AI model for predicting protein structures. While AlphaFold 2 cracked the code of protein folding—arguably the most significant scientific AI…

The Day the Mainframe Died: How AI Just Cracked the COBOL Code

Anthropic has released Claude Code, a specialized AI model capable of accurately translating legacy COBOL into modern, maintainable languages like Java and Python. The market reaction was swift and brutal: IBM, the titan of mainframe computing, saw its stock crater by 13% in a single day.

How Do AI Agents Work? The Essential 2026 Guide, Simply Explained

Introduction: The 2026-friendly explainer of autonomous AI agents and why they matter. In 2026, autonomous AI agents have moved from buzzword to business backbone. These systems use large language models (LLMs), tools, memory, and feedback loops to perform tasks end to end, with no constant human prompting required. They book meetings, analyze contracts, triage tickets, monitor data …

How to Detect AI-Generated Content: The Complete 2026 Playbook

Introduction: Why detecting AI-generated content matters in 2026. By 2026, generative models write ads, summarize research, craft phishing emails, generate product images, and mimic voices at scale. For enterprises, educators, publishers, and platforms, knowing how to detect AI-generated content is no longer a niche skill; it’s an operational necessity. Detection underpins trust, compliance, revenue integrity, and …

How Does AI Watermarking Work? The Essential 2026 Deep Dive, Explained

Introduction: A 2026 deep dive into AI watermarking, simply explained. How does AI watermarking work in 2026? In simplest terms, it is a set of techniques to embed or assert an origin signal in AI-generated text, images, audio, and video so that downstream systems can detect it or verify provenance. It spans two families: payload …

Beyond Confabulation: Exploring Deeper Consciousness Theories in AI

This article explores the phenomenon of confabulation in AI, reviews proposals for engineering self‑monitoring into LLMs, and situates these developments within the broader landscape of consciousness research. We will see why reducing confabulation demands more than just larger language models; it requires a deeper engagement with theories of meta‑cognition and self‑awareness. We will also discuss a landmark 2025 adversarial collaboration that tested global workspace and integrated information theories in human brains (biopharmatrend.com) and consider what its lessons mean for AI.

Concept Injection: A New Microscope for the Machine Mind

This article explains concept injection, reviews the evidence from Anthropic’s 2025 study and subsequent commentary, and discusses the broader implications for AI alignment and safety. We will close with a transition to the next piece in this series, which considers the philosophical ramifications of these techniques.