The Week AI Split in Two

If you’ve been feeling AI fatigue lately, you’re not imagining it. Breakthroughs that used to define entire decades are now happening on a weekly cadence, and keeping up feels less like staying informed and more like drinking from a fire hose. But buried under the noise this week is something worth actually paying attention to: a genuine fork in the road.

On one side, we’re seeing a push toward massive cloud models with near-unlimited context and pixel-perfect vision. On the other, a quieter revolution is happening at the edge — sophisticated AI logic squeezed into frameworks that run on $5 microcontrollers with barely any compute overhead. Both paths are maturing fast, and together they signal that the industry has officially outgrown its “one model fits all” phase.

This isn’t just a story about bigger or smaller models. It’s about the architecture layer becoming just as strategically important as the model itself. Here’s what’s actually going on.

The GPT 5.4 Leak That Wasn’t Supposed to Happen

A next-generation OpenAI model, apparently called GPT 5.4, recently surfaced in technical traces buried inside Codex pull requests. This wasn’t a rumor or a spec sheet someone posted on a forum. The leak included concrete references to a /fast command and a feature flag called view_image_original_resolution. OpenAI moved quickly to scrub the references, quietly renaming them to “GPT 5.3 Codex,” but the fact that the name had already appeared in drop-down menus suggests a model well into internal testing.

The timing makes sense. DeepSeek V4 is looming, and the competitive pressure on OpenAI to stay ahead is very real.

The headline spec is a rumored 2-million-token context window. Getting there is a serious engineering challenge — you’re asking the model to cache and retrieve an enormous amount of data within a single inference session without blowing out memory requirements. There’s also a strange artifact that’s surfaced: some instances of GPT 5.2 have reportedly identified themselves as GPT 5.4 when asked, which suggests internal testing is bleeding into production in unexpected ways.
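To get a feel for why memory is the bottleneck, here is a back-of-the-envelope estimate of the key-value cache a transformer keeps per token. The formula is the standard one for decoder models; the layer count, head count, and head size are hypothetical placeholders, since nothing about GPT 5.4's real dimensions is public.

```python
def kv_cache_bytes(tokens: int, layers: int, kv_heads: int,
                   head_dim: int, bytes_per_value: int = 2) -> int:
    """Bytes needed to cache attention keys and values for `tokens` positions.

    The leading 2 accounts for storing both a key and a value vector
    per layer, per KV head, per token.
    """
    per_token = 2 * layers * kv_heads * head_dim * bytes_per_value
    return tokens * per_token


# Hypothetical model shape (an assumption for illustration only).
LAYERS, KV_HEADS, HEAD_DIM = 80, 8, 128

for tokens in (128_000, 2_000_000):
    gib = kv_cache_bytes(tokens, LAYERS, KV_HEADS, HEAD_DIM) / 2**30
    print(f"{tokens:>9,} tokens -> {gib:,.1f} GiB of KV cache")
```

With these made-up dimensions and 16-bit values, a single 2-million-token session needs roughly 610 GiB of cache, which is why tricks like cache compression or sparse attention tend to come up whenever context windows this large are discussed.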

The benchmark people are watching is the “8 needle test” — whether the model can maintain better than 90% recall accuracy across the full context window. If it can, that’s not just an upgrade. It’s a genuinely different kind of machine memory.
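For readers who haven't seen a multi-needle test, the idea can be sketched in a few lines: hide several facts in a long stretch of filler, ask for all of them back, and score the fraction recovered. Everything below is illustrative. The needle wording, the filler, and the regex_reader function (a stand-in for a real model call) are assumptions, not the actual benchmark harness.

```python
import random
import re


def build_haystack(needles: dict, filler_sentences: int = 1000, seed: int = 0) -> str:
    """Scatter one sentence per needle at random positions in filler text."""
    rng = random.Random(seed)
    sentences = [f"Filler sentence number {i}." for i in range(filler_sentences)]
    for key, value in needles.items():
        position = rng.randrange(len(sentences) + 1)
        sentences.insert(position, f"The secret code for {key} is {value}.")
    return " ".join(sentences)


def recall(needles: dict, answers: dict) -> float:
    """Fraction of needles whose value was returned exactly."""
    hits = sum(1 for key, value in needles.items() if answers.get(key) == value)
    return hits / len(needles)


def regex_reader(haystack: str, keys) -> dict:
    """Stand-in for a model call: finds each needle by pattern matching."""
    found = {}
    for key in keys:
        match = re.search(rf"The secret code for {re.escape(key)} is (\w+)\.", haystack)
        if match:
            found[key] = match.group(1)
    return found


needles = {f"project-{i}": f"code{i:03d}" for i in range(8)}
haystack = build_haystack(needles)
score = recall(needles, regex_reader(haystack, needles))
print(f"recall: {score:.0%}, clears the 90% bar: {score > 0.9}")
```

Note what the 90% bar implies with eight needles: seven out of eight is 87.5%, so a model has to recover every single one to pass.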

The practical implication is significant. A reliable 2-million-token window effectively kills many RAG use cases. Enterprises could feed in entire codebases or months of executive communications in a single session, without needing an external retrieval layer to manage it. That changes the architecture of a lot of products.
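In practice, the decision comes down to a budget check: does the whole corpus fit in the window with room left for the answer? A rough sketch of that check follows. The four-characters-per-token ratio is a common rule of thumb for English text rather than a real tokenizer, and the function names and the 50,000-token reserve are hypothetical.

```python
CHARS_PER_TOKEN = 4  # rough heuristic for English; real tokenizers vary


def estimate_tokens(text: str) -> int:
    """Approximate token count using ceiling division on character length."""
    return -(-len(text) // CHARS_PER_TOKEN)


def choose_strategy(documents, context_window: int = 2_000_000,
                    reserve: int = 50_000):
    """Return ("single-prompt" or "retrieval", estimated total tokens).

    `reserve` holds back room for instructions and the model's reply.
    """
    total = sum(estimate_tokens(doc) for doc in documents)
    budget = context_window - reserve
    return ("single-prompt" if total <= budget else "retrieval", total)


small_codebase = ["x" * 4_000] * 100      # about 100k tokens
huge_archive = ["x" * 4_000_000] * 3      # about 3M tokens
print(choose_strategy(small_codebase))
print(choose_strategy(huge_archive))
```

Anything that lands on the "retrieval" side of that check still needs a RAG-style layer, which is why the claim is "many" use cases and not all of them.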

Pixel-Level Vision: From Guessing to Actually Seeing

That view_image_original_resolution flag points to something more interesting than it sounds. Today’s vision models almost universally compress or downscale images before processing. That keeps compute costs manageable, but it introduces distortion and artifacts. GPT 5.4 appears to sidestep this entirely, preserving the original byte data and processing images at their native resolution.
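How much detail does downscaling actually throw away? The arithmetic is simple enough to sketch. The 2048-pixel cap below is a hypothetical preprocessing limit chosen for illustration, not a documented setting of any particular model.

```python
def downscaled_size(width: int, height: int, max_side: int = 2048):
    """Shrink so the longest side is at most `max_side`, keeping aspect ratio."""
    scale = min(1.0, max_side / max(width, height))
    return max(1, round(width * scale)), max(1, round(height * scale))


def pixels_retained(width: int, height: int, max_side: int = 2048) -> float:
    """Fraction of the original pixel count that survives the resize."""
    new_width, new_height = downscaled_size(width, height, max_side)
    return (new_width * new_height) / (width * height)


# A hypothetical 8192 x 6144 scan, such as a large schematic.
width, height = 8192, 6144
print(downscaled_size(width, height))
print(f"{pixels_retained(width, height):.2%} of pixels retained")
```

Under that assumed cap, a scan of this size keeps only about one pixel in sixteen, which is where fine traces on a circuit diagram or hairline features in a medical image get lost.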

For most consumer applications, that difference is barely noticeable. But for medical imaging, architectural schematics, or complex circuit diagrams, it matters enormously. These are domains where a “best guess” is unacceptable, and where current vision models frequently hallucinate edges or misread fine details.

The shift here is from AI vision as interpretation — the model approximating what’s in an image — to AI vision as genuine data analysis. That’s the difference between a creative assistant and a diagnostic tool, and it opens up applications that have been out of reach until now.

NullClaw and the End of Python’s Monopoly

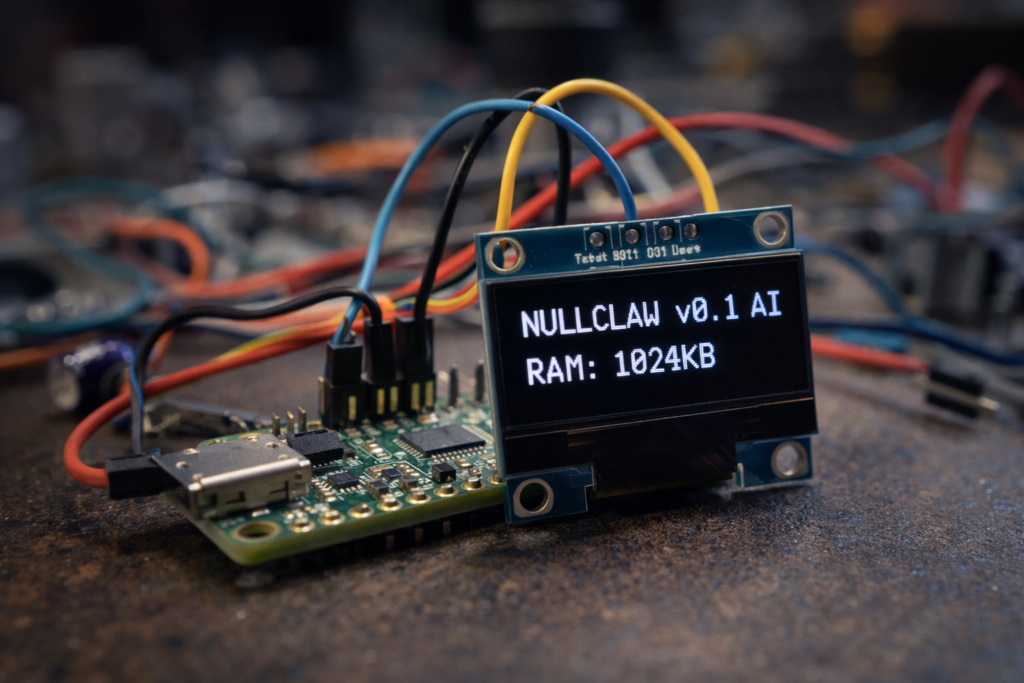

While OpenAI is scaling up, a framework called NullClaw is doing something almost philosophically opposite. Written in 45,000 lines of raw Zig and validated by over 2,700 tests, it’s a 678 KB agent framework that achieves production-grade stability by eliminating the runtime layer entirely. No virtual machines, no garbage collector. Just direct, manual memory management.

The performance numbers on low-end hardware are hard to argue with. Python-based agents typically take several seconds to cold boot; Go-based agents clock in around a second. NullClaw does it in under two milliseconds, with a total RAM footprint of around 1 MB — compared to the 100 MB to 1 GB that most frameworks require.

What that means practically: sophisticated AI agents running on $5 Arduino or STM32 boards, completely offline, with no cloud dependency. That’s not a niche use case. That’s AI moving from the data center into physical infrastructure — sensors, embedded systems, devices that have historically been considered “dumb.”

Small Doesn’t Mean Insecure

One assumption worth challenging is that minimal footprint means minimal security. NullClaw’s approach pushes back hard on that. It uses ChaCha20-Poly1305 to encrypt API keys. This is an authenticated cipher that runs fast in plain software, with no need for hardware AES acceleration, which makes it a good fit for constrained hardware. It also employs multiple isolation layers, including Landlock, Firejail, and Docker, to sandbox tool execution from the host system.
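To make the encryption piece concrete, here is a minimal sketch of sealing an API key with ChaCha20-Poly1305. It uses Python's third-party cryptography package rather than NullClaw's own Zig code, and the function names, the nonce-prefixed storage layout, and the choice to bind the provider name as associated data are all assumptions for illustration.

```python
import os

from cryptography.exceptions import InvalidTag
from cryptography.hazmat.primitives.ciphers.aead import ChaCha20Poly1305

NONCE_SIZE = 12  # ChaCha20-Poly1305 uses a 96-bit nonce


def seal_api_key(master_key: bytes, api_key: str, provider: str) -> bytes:
    """Encrypt an API key; the provider name is authenticated but not hidden."""
    nonce = os.urandom(NONCE_SIZE)  # must never repeat under the same key
    ciphertext = ChaCha20Poly1305(master_key).encrypt(
        nonce, api_key.encode(), provider.encode())
    return nonce + ciphertext  # hypothetical layout: nonce stored up front


def open_api_key(master_key: bytes, blob: bytes, provider: str) -> str:
    """Decrypt a sealed key; raises InvalidTag if anything was altered."""
    nonce, ciphertext = blob[:NONCE_SIZE], blob[NONCE_SIZE:]
    plaintext = ChaCha20Poly1305(master_key).decrypt(
        nonce, ciphertext, provider.encode())
    return plaintext.decode()


master_key = ChaCha20Poly1305.generate_key()  # 32 random bytes
blob = seal_api_key(master_key, "sk-example-not-a-real-key", "deepseek")
print(open_api_key(master_key, blob, "deepseek"))

try:
    open_api_key(master_key, blob, "anthropic")  # wrong provider label
except InvalidTag:
    print("rejected: blob was sealed for a different provider")
```

The Poly1305 tag is what makes this more than plain encryption: a flipped bit in the stored blob, or a key swapped between providers, fails loudly instead of decrypting to garbage.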

It also supports over 22 AI providers (including DeepSeek and Anthropic) and 13 communication platforms, with MCP (Model Context Protocol) integration for standardizing how edge agents interact with tools and memory. The plug-and-play architecture means the whole thing is protocol-agnostic by design.

The broader point here is worth sitting with: the “bloat” in most AI development stacks isn’t a necessary feature of intelligence. It’s a consequence of language choice and inefficient runtimes. NullClaw is proof that you can strip all of that out without losing capability.

Alibaba’s Copa: The Persistent Workstation

Alibaba has taken a different angle with Copa, their open-source agent workstation. Where NullClaw is about radical minimalism, Copa is about persistence and deep integration into enterprise workflows.

The architecture runs on three layers: AgentScope handles logic, Runtime handles stability, and REMI manages memory. That last piece is the most interesting. REMI addresses one of the most frustrating limitations of current LLMs, their statelessness, by giving agents a persistent memory of user preferences and task history. The agent doesn’t just respond to prompts; it carries what it has learned about your patterns from one session to the next.
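The general idea of a persistent memory layer is easy to sketch, even without knowing REMI's internals. The example below is not REMI's API. It is a hypothetical AgentMemory class built on Python's standard sqlite3 module, showing preferences and task history surviving from one session to the next.

```python
import os
import sqlite3
import tempfile


class AgentMemory:
    """Hypothetical persistent store for user preferences and task history."""

    def __init__(self, path: str):
        self.db = sqlite3.connect(path)
        with self.db:  # commits the schema changes
            self.db.execute(
                "CREATE TABLE IF NOT EXISTS preferences ("
                "user TEXT, key TEXT, value TEXT, PRIMARY KEY (user, key))")
            self.db.execute(
                "CREATE TABLE IF NOT EXISTS history ("
                "id INTEGER PRIMARY KEY AUTOINCREMENT, user TEXT, task TEXT)")

    def set_preference(self, user: str, key: str, value: str) -> None:
        with self.db:  # a repeated key overwrites the earlier value
            self.db.execute(
                "INSERT OR REPLACE INTO preferences VALUES (?, ?, ?)",
                (user, key, value))

    def log_task(self, user: str, task: str) -> None:
        with self.db:
            self.db.execute(
                "INSERT INTO history (user, task) VALUES (?, ?)", (user, task))

    def preferences(self, user: str) -> dict:
        rows = self.db.execute(
            "SELECT key, value FROM preferences WHERE user = ?", (user,))
        return dict(rows.fetchall())

    def recent_tasks(self, user: str, limit: int = 5) -> list:
        rows = self.db.execute(
            "SELECT task FROM history WHERE user = ? ORDER BY id DESC LIMIT ?",
            (user, limit))
        return [row[0] for row in rows]

    def close(self) -> None:
        self.db.close()


path = os.path.join(tempfile.mkdtemp(), "agent_memory.db")

first = AgentMemory(path)  # first session
first.set_preference("ana", "report_format", "bullet points")
first.log_task("ana", "weekly sales summary")
first.close()

second = AgentMemory(path)  # a later session, same file
print(second.preferences("ana"), second.recent_tasks("ana"))
```

A real system would feed these stored facts back into the prompt on each turn. The storage is the easy part; deciding what is worth remembering is where a layer like REMI earns its keep.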

Copa is also built for the modern enterprise communication stack, with an “all domain access layer” that lets a single instance talk across DingTalk, Lark, Discord, and iMessage simultaneously. Combined with a skill extension system for things like web scraping and calendar management, and support for scheduled and automated reporting, it starts to look less like a chatbot and more like a background process that’s always working on your behalf.

The shift from reactive tool to proactive assistant isn’t new as a concept. Copa is one of the more credible attempts to actually build it.

Either Infinite or Invisible

The AI landscape is splitting, and the split is clarifying. Cloud titans like GPT 5.4 are heading toward effectively unlimited context — the model’s capacity to hold and reason over data approaching something genuinely vast. Frameworks like NullClaw and Copa are heading in the opposite direction, embedding intelligence directly into hardware and workstations, where it operates autonomously in the background.

For developers and strategists, the competitive advantage in this new environment won’t come from simply using the biggest model available. It’ll come from understanding which architecture fits which problem — and from building on top of both.

The question worth asking now isn’t “which model is best.” It’s whether your future workflows will be defined by a single, god-like cloud intelligence or by a distributed swarm of tiny, invisible ones.